In today’s fast-evolving enterprise AI landscape, organizations are increasingly focused on how models deliver real-time predictions at scale. This shift has made AI Inference Strategy a core pillar of digital transformation. Whether a business chooses cloud environments or on-prem infrastructure, the decision directly shapes performance, cost efficiency, and long-term scalability. A well-defined AI Inference Strategy ensures that machine learning models are not just trained effectively but also deployed in a way that aligns with business objectives.

Understanding the importance of an AI Inference Strategy is critical because inference is where AI creates actual business value. It is the stage where models interact with real-world data, generate predictions, and support decision-making. Without a strong AI Inference Strategy, even the most advanced models can fail to deliver consistent outcomes.

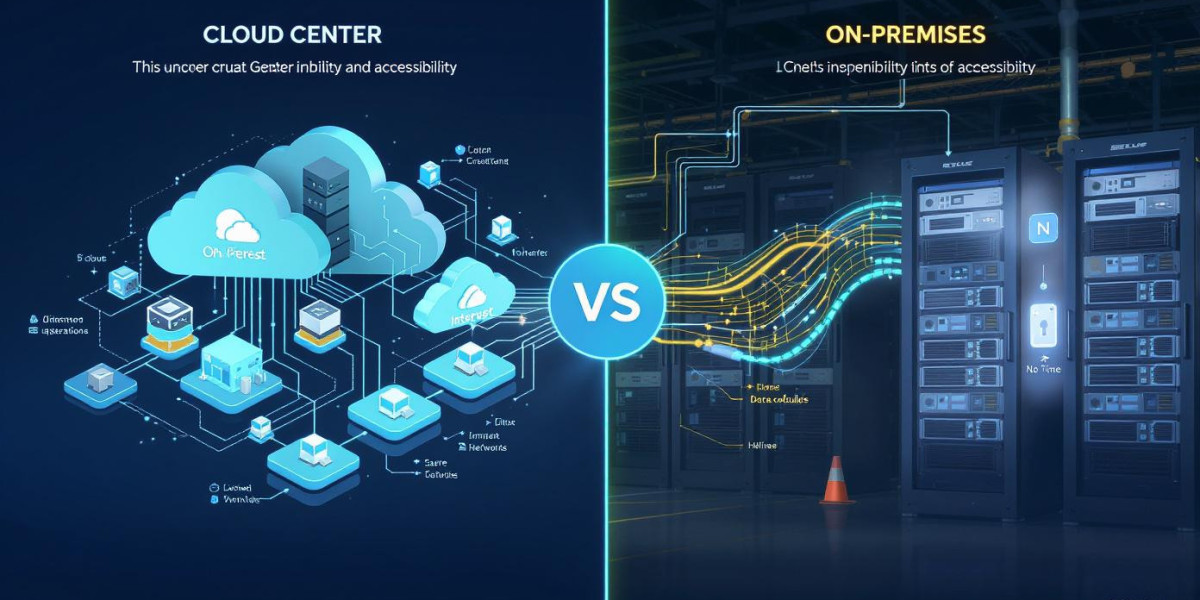

Understanding Cloud-Based AI Inference Strategy

A cloud-based AI Inference Strategy focuses on deploying machine learning models on scalable cloud infrastructure. This approach allows organizations to dynamically allocate resources based on demand. One of the biggest advantages of a cloud-driven AI Inference Strategy is elasticity. Businesses can scale up during peak loads and scale down when demand decreases.

Another key benefit of a cloud-oriented AI Inference Strategy is faster deployment. Teams can push updates and new models without worrying about hardware constraints. This reduces operational overhead and improves time to market. However, every request depends on network connectivity, and the added round-trip latency can hurt performance in real-time applications.

Despite these challenges, many enterprises adopt a cloud AI Inference Strategy because it reduces upfront infrastructure investment. It also integrates easily with modern data pipelines and AI services.

On-Prem AI Inference Strategy and Enterprise Control

An on-prem AI Inference Strategy is built around local infrastructure where organizations maintain full control over data and processing. This model is often preferred in industries with strict compliance requirements such as finance and healthcare.

A strong on-prem AI Inference Strategy offers more predictable performance because requests do not depend on an external network. Latency is often lower, making it suitable for real-time systems like fraud detection or industrial automation. Security is another major advantage since sensitive data never leaves the internal network.

However, maintaining an on-prem AI Inference Strategy requires significant investment in hardware, maintenance, and technical expertise. Scaling can also be slower compared to cloud environments.

Cost Dynamics in AI Inference Strategy

Cost plays a major role in selecting an AI Inference Strategy. Cloud solutions often follow a pay-as-you-go model, which can be cost-effective for fluctuating workloads. On the other hand, an on-prem AI Inference Strategy involves high upfront capital expenditure but may reduce long-term operational costs for stable workloads.

Organizations must evaluate usage patterns before deciding their AI Inference Strategy. For example, startups may prefer cloud models, while large enterprises with stable traffic might benefit from on-prem infrastructure.
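The trade-off between pay-as-you-go pricing and upfront capital expenditure can be made concrete with a break-even calculation. The sketch below is a minimal illustration, not a pricing model: the function names and every figure (hardware cost, amortization period, per-request price) are assumptions chosen for the example.

```python
def monthly_cloud_cost(requests_per_month: float, price_per_1k_requests: float) -> float:
    """Pay-as-you-go: cost grows linearly with request volume."""
    return requests_per_month / 1000 * price_per_1k_requests


def monthly_onprem_cost(capex: float, amortization_months: int, monthly_opex: float) -> float:
    """Upfront hardware spend spread over its useful life, plus fixed running costs."""
    return capex / amortization_months + monthly_opex


def break_even_requests(capex: float, amortization_months: int,
                        monthly_opex: float, price_per_1k_requests: float) -> float:
    """Monthly request volume at which cloud and on-prem cost the same."""
    fixed = monthly_onprem_cost(capex, amortization_months, monthly_opex)
    return fixed / price_per_1k_requests * 1000


if __name__ == "__main__":
    # Hypothetical figures, for illustration only.
    volume = break_even_requests(capex=108_000, amortization_months=36,
                                 monthly_opex=2_000, price_per_1k_requests=0.5)
    print(f"Break-even at about {volume:,.0f} requests per month")
```

Under these assumed numbers, a workload that stays well below the break-even volume favors the cloud, while a stable workload well above it favors on-prem.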

Latency and Performance Considerations

Latency is one of the most critical factors in any AI Inference Strategy. Cloud-based systems may introduce delays due to network transmission, while on-prem systems can respond faster when the model runs close to where the data is produced.

For applications such as autonomous systems or real-time analytics, optimizing AI Inference Strategy for low latency becomes essential. Businesses often adopt hybrid models to balance performance and scalability.
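One way to reason about latency is to split a request into network, queueing, and model time, then compare the total against a budget. The following is a rough sketch under assumed values: the `LatencyEstimate` type and all millisecond figures are hypothetical, not measurements of any real deployment.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class LatencyEstimate:
    network_rtt_ms: float  # round trip between caller and model server
    queue_ms: float        # time spent waiting for a free worker
    model_ms: float        # time the model takes to compute a prediction

    @property
    def total_ms(self) -> float:
        return self.network_rtt_ms + self.queue_ms + self.model_ms


def meets_budget(estimate: LatencyEstimate, budget_ms: float) -> bool:
    """True when the end-to-end estimate fits inside the latency budget."""
    return estimate.total_ms <= budget_ms


if __name__ == "__main__":
    # Illustrative numbers only; real values must come from measurement.
    cloud = LatencyEstimate(network_rtt_ms=40, queue_ms=5, model_ms=20)
    on_prem = LatencyEstimate(network_rtt_ms=1, queue_ms=5, model_ms=20)
    for name, estimate in (("cloud", cloud), ("on-prem", on_prem)):
        print(name, estimate.total_ms, meets_budget(estimate, budget_ms=50))
```

In this example only the network term differs between the two options, which is why the same model can pass a budget on-prem and miss it in the cloud.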

Security and Compliance in AI Inference Strategy

Security requirements heavily influence AI Inference Strategy decisions. Cloud providers offer strong security frameworks, but some organizations still prefer on-prem systems for complete data control.

A well-structured AI Inference Strategy must comply with regulations such as data privacy laws and industry-specific standards. This ensures that sensitive data is processed securely without violating compliance requirements.

Hybrid AI Inference Strategy Approach

Many modern enterprises are now adopting a hybrid AI Inference Strategy that combines cloud and on-prem capabilities. This approach allows businesses to process sensitive workloads internally while leveraging the cloud for scalable inference tasks.

A hybrid AI Inference Strategy provides flexibility and resilience. It also helps optimize costs by distributing workloads efficiently. Organizations can dynamically adjust their AI Inference Strategy based on performance requirements and data sensitivity.
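A hybrid setup needs some rule for deciding where each request runs. The sketch below shows one possible routing policy based on data sensitivity and latency requirements. The `InferenceRequest` fields, the `choose_target` function, and the 60 ms cloud latency default are all assumptions made for illustration.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class InferenceRequest:
    contains_sensitive_data: bool
    latency_budget_ms: float


def choose_target(request: InferenceRequest, onprem_has_capacity: bool,
                  cloud_latency_ms: float = 60.0) -> str:
    """Return "on_prem" or "cloud" for a single request."""
    # Sensitive data stays inside the internal network, regardless of load.
    if request.contains_sensitive_data:
        return "on_prem"
    # Tight latency budgets go on-prem when there is room for them.
    if request.latency_budget_ms < cloud_latency_ms and onprem_has_capacity:
        return "on_prem"
    # Everything else uses elastic cloud capacity. A tight-budget request
    # that lands here may miss its budget, which is worth monitoring.
    return "cloud"


if __name__ == "__main__":
    print(choose_target(InferenceRequest(True, 500), onprem_has_capacity=False))
    print(choose_target(InferenceRequest(False, 20), onprem_has_capacity=True))
    print(choose_target(InferenceRequest(False, 500), onprem_has_capacity=True))
```

Putting the sensitivity check first means compliance is never traded away for performance or cost. A real policy would likely add more signals, such as region or model version.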

Scalability and Future Readiness

Scalability is a defining factor in any AI Inference Strategy. Cloud systems naturally support horizontal scaling, making them ideal for rapidly growing workloads. On-prem systems require additional hardware provisioning, which can slow down scaling efforts.

A future-ready AI Inference Strategy should consider long-term growth, model complexity, and evolving data needs. Businesses investing in scalable infrastructure are better positioned to handle next-generation AI workloads.
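Horizontal scaling comes down to a capacity question: how many model replicas does a given peak load need? A minimal sketch, assuming each replica sustains a fixed request rate and that a safety margin is kept on top of the expected peak. The function name and the 25% default headroom are illustrative choices, not a recommendation.

```python
import math


def replicas_needed(peak_rps: float, rps_per_replica: float, headroom: float = 0.25) -> int:
    """Replicas required to serve peak traffic plus a safety margin."""
    if rps_per_replica <= 0:
        raise ValueError("rps_per_replica must be positive")
    required = peak_rps * (1 + headroom) / rps_per_replica
    # Always keep at least one replica running, even with no traffic.
    return max(1, math.ceil(required))


if __name__ == "__main__":
    # Hypothetical load figures.
    print(replicas_needed(peak_rps=800, rps_per_replica=100))
    print(replicas_needed(peak_rps=850, rps_per_replica=100))
```

In the cloud this number can be adjusted as traffic changes. On-prem, the same calculation has to be done ahead of time, because each additional replica may mean buying and installing hardware.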

Real-World Use Cases of AI Inference Strategy

Different industries apply AI Inference Strategy in unique ways. In retail, it supports recommendation engines and personalized experiences. In healthcare, it powers diagnostic systems and predictive analytics. In finance, it enables fraud detection and risk scoring.

Each use case requires a tailored AI Inference Strategy that balances latency, accuracy, and cost. For example, high-frequency trading systems demand ultra-low latency inference, which usually makes on-prem solutions more suitable.

Decision Framework for AI Inference Strategy

Choosing the right AI Inference Strategy requires evaluating multiple factors including workload type, compliance requirements, cost structure, and scalability needs. Organizations should also assess technical expertise and infrastructure readiness before finalizing their approach.

A structured AI Inference Strategy decision framework helps businesses avoid inefficiencies and ensures optimal performance across AI systems. It also supports better alignment between IT infrastructure and business goals.
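A simple way to structure the decision is a weighted scoring matrix: rate each option against each factor, weight the factors by importance, and rank the totals. The sketch below is illustrative only. The factor names follow the text above, but the weights and the 1 to 5 scores are hypothetical and would need to come from an organization's own assessment.

```python
def weighted_score(scores: dict[str, int], weights: dict[str, int]) -> int:
    """Sum of factor scores multiplied by their weights."""
    return sum(weights[factor] * scores[factor] for factor in weights)


def rank_options(options: dict[str, dict[str, int]], weights: dict[str, int]) -> list[str]:
    """Option names ordered from best to worst total score."""
    return sorted(options, key=lambda name: weighted_score(options[name], weights),
                  reverse=True)


if __name__ == "__main__":
    # Hypothetical weights: higher means the factor matters more.
    weights = {"compliance": 4, "latency": 3, "cost": 2, "scalability": 1}
    # Hypothetical scores on a 1 (poor) to 5 (excellent) scale.
    options = {
        "cloud":   {"compliance": 3, "latency": 3, "cost": 4, "scalability": 5},
        "on_prem": {"compliance": 5, "latency": 5, "cost": 2, "scalability": 2},
        "hybrid":  {"compliance": 4, "latency": 4, "cost": 3, "scalability": 4},
    }
    for name in rank_options(options, weights):
        print(name, weighted_score(options[name], weights))
```

Changing the weights changes the outcome, so the numbers should prompt discussion rather than settle it. A compliance-heavy weighting like the one assumed here favors on-prem, while a weighting that emphasizes scalability would favor the cloud.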

Important Considerations for AI Inference Strategy Implementation

When implementing an AI Inference Strategy, organizations must focus on monitoring, optimization, and continuous improvement. Model performance can degrade over time as real-world data drifts away from the data the model was trained on, making regular updates essential.

Additionally, integrating observability tools into the AI Inference Strategy helps track latency, accuracy, and resource usage. This ensures that systems remain efficient and reliable.
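As a small example of what latency tracking can look like, the sketch below computes a 95th-percentile latency over a window of recent requests and flags it when it exceeds a threshold. It uses the nearest-rank percentile method. The function names, sample values, and threshold are assumptions for illustration, and a production system would typically rely on a dedicated observability tool instead.

```python
import math


def percentile(values: list[float], pct: int) -> float:
    """Nearest-rank percentile of a non-empty list, for an integer pct in 1..100."""
    if not values:
        raise ValueError("values must not be empty")
    ordered = sorted(values)
    rank = math.ceil(pct * len(ordered) / 100)
    return ordered[max(rank, 1) - 1]


def latency_alert(latencies_ms: list[float], p95_threshold_ms: float) -> bool:
    """True when the window's p95 latency exceeds the threshold."""
    return percentile(latencies_ms, 95) > p95_threshold_ms


if __name__ == "__main__":
    # Hypothetical window of 20 request latencies in milliseconds.
    window = [10.0] * 18 + [500.0, 500.0]
    print(percentile(window, 95), latency_alert(window, p95_threshold_ms=100))
```

A percentile is used instead of an average because a few very slow requests can hide behind a healthy-looking mean.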

Security updates, workload balancing, and infrastructure tuning are also essential components of a sustainable AI Inference Strategy.
